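My family loves following TV series, but we could never find a reliable RSS source for new episodes. The only option was to hunt through free sharing sites and download each episode by hand, which was tedious and time-consuming. That gave me an idea: turn these sites' listings into RSS feeds and let qBittorrent download new items automatically, so nobody has to do it manually.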

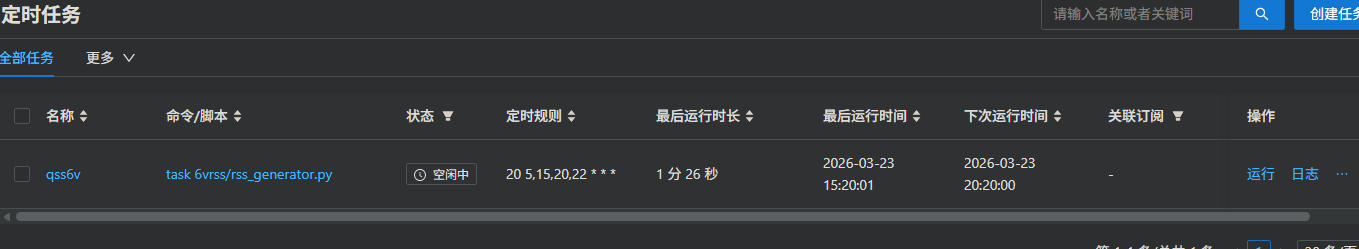

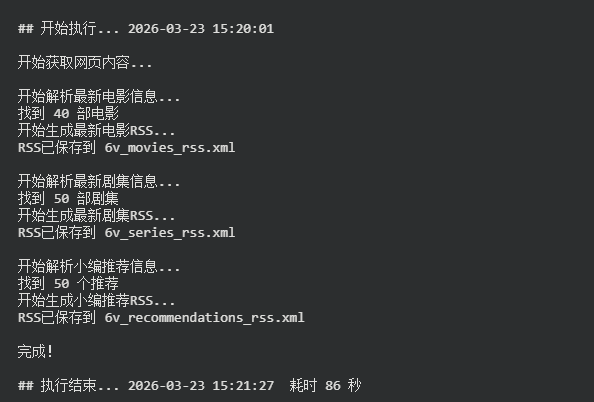

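The approach is roughly as follows:

1. A script crawls the sharing site once a day for the latest episode updates and converts them into an rss.xml feed.
2. A scheduled task in the Qinglong panel (青龙面板) runs the script automatically.
3. An HTTP service on the server publishes the generated file, and its feed URL is added to qBittorrent, which then downloads new items automatically.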

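Once the whole pipeline was running, following shows at home became much easier. Before, someone had to browse the site every day, find the links, and wait for downloads. Now the only step is to set up the RSS download rules in qBittorrent once.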
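If you would rather script the qBittorrent side than click through the GUI, the same subscription and rule can probably be created through qBittorrent's WebUI API. Below is a minimal sketch with several assumptions:

- The WebUI is enabled at the hypothetical address `QBIT_URL`.
- Nginx serves the generated feed at the hypothetical `FEED_URL`.
- The endpoints (`/api/v2/auth/login`, `/api/v2/rss/addFeed`, `/api/v2/rss/setRule`) follow WebUI API v2.
- Only a subset of the `ruleDef` fields is sent, and the omitted fields are assumed to fall back to qBittorrent's defaults.

```python
import json
import urllib.error
import urllib.parse
import urllib.request
from http.cookiejar import CookieJar

# Hypothetical values: replace with your own WebUI address and feed URL
QBIT_URL = "http://127.0.0.1:8080"
FEED_URL = "http://your-server/rss/6v_series_rss.xml"


def build_rule_def(feed_url, must_contain, save_path):
    """Build a ruleDef object for /api/v2/rss/setRule (a subset of its fields)."""
    return {
        "enabled": True,
        "mustContain": must_contain,  # download only titles containing this text
        "mustNotContain": "",
        "useRegex": False,
        "affectedFeeds": [feed_url],  # feeds this rule applies to
        "savePath": save_path,
    }


def make_client(base_url):
    """Opener that keeps the SID login cookie and sends a Referer matching the WebUI."""
    opener = urllib.request.build_opener(urllib.request.HTTPCookieProcessor(CookieJar()))
    opener.addheaders.append(("Referer", base_url))
    return opener


def api_post(opener, base_url, path, fields):
    """POST form-encoded fields to a WebUI API endpoint and return the response text."""
    data = urllib.parse.urlencode(fields).encode()
    with opener.open(base_url + path, data=data, timeout=10) as resp:
        return resp.read().decode()


def register_feed_and_rule(username, password, rule_name, must_contain, save_path):
    """Log in, subscribe to FEED_URL and create one auto-download rule for it."""
    opener = make_client(QBIT_URL)
    body = api_post(opener, QBIT_URL, "/api/v2/auth/login",
                    {"username": username, "password": password})
    if body.strip() != "Ok.":
        raise RuntimeError("qBittorrent login failed")
    try:
        api_post(opener, QBIT_URL, "/api/v2/rss/addFeed", {"url": FEED_URL})
    except urllib.error.HTTPError as e:
        # 409 means the feed could not be added, typically because it already exists
        if e.code != 409:
            raise
    rule = build_rule_def(FEED_URL, must_contain, save_path)
    api_post(opener, QBIT_URL, "/api/v2/rss/setRule",
             {"ruleName": rule_name, "ruleDef": json.dumps(rule)})
```

A call might look like `register_feed_and_rule("admin", "your-password", "6v series", "Some Show", "/downloads/tv")`. All the argument values there are placeholders.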

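The HTTP part is lightweight. I use Nginx with a plain static directory to publish the generated rss.xml files. As long as the file paths stay fixed, the feed URLs configured in qBittorrent never need to change.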
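Nginx serves rss.xml straight from disk. In principle, qBittorrent could poll the file while a scheduled run is still rewriting it and receive a half-written feed. One way to avoid this is an atomic variant of `save_rss`, sketched below with a hypothetical function name:

1. Write the new content to a temporary file in the same directory.
2. Swap the temporary file into place with `os.replace`. The rename is atomic on POSIX when the source and the target are on the same filesystem.

```python
import os
import tempfile


def save_rss_atomic(rss_content, filename):
    """Write rss_content to filename so readers never see a partially written file."""
    directory = os.path.dirname(os.path.abspath(filename))
    # The temp file must be in the same directory (same filesystem) for os.replace to be atomic
    fd, tmp_path = tempfile.mkstemp(dir=directory, prefix=".rss-", suffix=".tmp")
    try:
        with os.fdopen(fd, "w", encoding="utf-8") as f:
            f.write(rss_content)
            f.flush()
            os.fsync(f.fileno())
        # mkstemp creates the file with mode 0600; make it readable by the web server user
        os.chmod(tmp_path, 0o644)
        os.replace(tmp_path, filename)
    except BaseException:
        # Remove the temp file if anything failed before the swap
        if os.path.exists(tmp_path):
            os.remove(tmp_path)
        raise
```

In `main()`, you would call `save_rss_atomic(rss_content, os.path.join("/var/www/rss", rss_filename))` instead of `save_rss`. The directory `/var/www/rss` is a hypothetical Nginx root.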

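If you have similar needs, this approach may be worth a try. It isn't limited to TV series. It can be extended to anime, or you could crawl the entire site to build a searchable resource library.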
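As a rough illustration of the resource-library idea, you could store the items produced by `parse_tab_content` in SQLite and search them by title. Each item is a dict with `title`, `link` and `magnet_links`. The sketch below uses Python's built-in `sqlite3` module and a plain `LIKE` search. The table layout and function names are hypothetical. A full-site crawl would also need pagination logic, which is not shown.

```python
import sqlite3


def open_library(path=":memory:"):
    """Create (if needed) a table with one row per magnet link."""
    conn = sqlite3.connect(path)
    # magnet is the primary key, so the same magnet link is stored only once
    conn.execute(
        """CREATE TABLE IF NOT EXISTS magnets (
               title  TEXT NOT NULL,
               page   TEXT NOT NULL,
               label  TEXT,
               magnet TEXT PRIMARY KEY
           )"""
    )
    return conn


def add_items(conn, items):
    """Insert items shaped like parse_tab_content() output; duplicates are ignored."""
    rows = [
        (item["title"], item["link"], m["text"], m["link"])
        for item in items
        for m in item["magnet_links"]
    ]
    with conn:  # commits the transaction on success
        conn.executemany("INSERT OR IGNORE INTO magnets VALUES (?, ?, ?, ?)", rows)


def search(conn, keyword):
    """Return (title, label, magnet) rows whose title contains keyword."""
    cur = conn.execute(
        "SELECT title, label, magnet FROM magnets WHERE title LIKE ? ORDER BY title",
        (f"%{keyword}%",),
    )
    return cur.fetchall()
```

Using the magnet link as the primary key means a daily re-crawl can insert everything again without creating duplicate rows.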

Here is what a run looks like in Qinglong:

And here is the result in qBittorrent:

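The full script is below. It depends on the third-party `requests` and `beautifulsoup4` packages and writes one XML file per feed into the current working directory: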

```python
import datetime
import email.utils
import hashlib
import xml.etree.ElementTree as ET
from urllib.parse import urljoin

import requests
from bs4 import BeautifulSoup

BASE_URL = 'https://www.xb6v.com'
ATOM_NS = 'http://www.w3.org/2005/Atom'
MAX_ITEMS = 50  # maximum number of titles per feed

# Register the "atom" prefix so ElementTree writes <atom:link> (not an auto-generated
# ns0 prefix) and declares xmlns:atom on <rss> by itself
ET.register_namespace('atom', ATOM_NS)


def fetch_page(url):
    """Fetch a web page and return its HTML, or None on failure."""
    try:
        headers = {
            'User-Agent': 'Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/91.0.4472.124 Safari/537.36'
        }
        response = requests.get(url, headers=headers, timeout=10)
        response.raise_for_status()  # treat 4xx/5xx responses as failures
        response.encoding = response.apparent_encoding
        return response.text
    except Exception as e:
        print(f"Failed to fetch page: {e}")
        return None


def extract_magnet_link(movie_url):
    """Extract all magnet links from a movie/series detail page."""
    try:
        html_content = fetch_page(movie_url)
        if not html_content:
            return []
        soup = BeautifulSoup(html_content, 'html.parser')
        # Collect every <a> tag whose href is a magnet link
        magnet_links = []
        for link in soup.find_all('a', href=lambda x: x and x.startswith('magnet:')):
            magnet_links.append({
                'link': link.get('href'),
                'text': link.get_text(strip=True),
            })
        return magnet_links
    except Exception as e:
        print(f"Failed to extract magnet links: {e}")
        return []


def parse_tab_content(html_content, tab_index=0):
    """Parse the list under one tab of the listing page.

    tab_index: 0 = latest movies, 1 = latest series, 2 = editor's picks
    """
    items = []
    soup = BeautifulSoup(html_content, 'html.parser')
    # Find the tab content container
    tab_content = soup.find('div', id='tab-content')
    if tab_content:
        # Find all <ul> elements, including those with the "hide" class
        all_lists = tab_content.find_all('ul')
        # Make sure the index is valid
        if 0 <= tab_index < len(all_lists):
            target_list = all_lists[tab_index]
            for item in target_list.find_all('li'):
                # Stop early: each item costs one extra request for its detail page
                if len(items) >= MAX_ITEMS:
                    break
                a_tag = item.find('a')
                if not a_tag:
                    continue
                title = a_tag.get_text(strip=True)
                if not title:
                    continue
                link = a_tag.get('href')
                if not link:
                    continue
                # Turn relative links into absolute URLs
                link = urljoin(BASE_URL, link)
                # Visit the detail page to collect its magnet links
                magnet_links = extract_magnet_link(link)
                # Build a description that lists every magnet link
                description = title
                if magnet_links:
                    description += "\nMagnet links:"
                    for i, magnet in enumerate(magnet_links):
                        description += f"\n{i + 1}. {magnet['text']}: {magnet['link']}"
                items.append({
                    'title': title,
                    'link': link,
                    'description': description,
                    'magnet_links': magnet_links,
                })
    return items


def parse_movies(html_content):
    """Parse the latest movies."""
    return parse_tab_content(html_content, 0)


def parse_series(html_content):
    """Parse the latest series."""
    return parse_tab_content(html_content, 1)


def parse_recommendations(html_content):
    """Parse the editor's picks."""
    return parse_tab_content(html_content, 2)


def rfc822_now():
    """Current UTC time as an RFC 822 date string with a matching +0000 offset."""
    return email.utils.format_datetime(datetime.datetime.now(datetime.timezone.utc))


def generate_rss(movies, feed_title, feed_link, feed_description):
    """Build an RSS 2.0 XML string in which each magnet link becomes one <item>."""
    rss = ET.Element('rss')
    rss.set('version', '2.0')

    channel = ET.SubElement(rss, 'channel')
    ET.SubElement(channel, 'title').text = feed_title
    ET.SubElement(channel, 'link').text = feed_link
    ET.SubElement(channel, 'description').text = feed_description

    # atom:link (namespace prefix registered at the top of the file)
    atom_link = ET.SubElement(channel, f'{{{ATOM_NS}}}link')
    atom_link.set('href', feed_link)
    atom_link.set('rel', 'self')
    atom_link.set('type', 'application/rss+xml')

    ET.SubElement(channel, 'language').text = 'zh-cn'
    build_date = rfc822_now()
    ET.SubElement(channel, 'lastBuildDate').text = build_date
    ET.SubElement(channel, 'ttl').text = '60'

    # Only titles with magnet links produce items
    for movie in movies:
        for magnet in movie['magnet_links']:
            item = ET.SubElement(channel, 'item')
            ET.SubElement(item, 'title').text = movie['title']
            ET.SubElement(item, 'link').text = magnet['link']

            # GUID: MD5 of the magnet link, so the same link always gets the same ID
            guid = ET.SubElement(item, 'guid')
            guid.set('isPermaLink', 'false')
            guid.text = hashlib.md5(magnet['link'].encode()).hexdigest()

            ET.SubElement(item, 'pubDate').text = build_date

            # RSS 2.0 requires url, length and type on <enclosure>; length is unknown here
            enclosure = ET.SubElement(item, 'enclosure')
            enclosure.set('url', magnet['link'])
            enclosure.set('length', '0')
            enclosure.set('type', 'application/x-bittorrent')

    return ET.tostring(rss, encoding='utf-8', xml_declaration=True).decode('utf-8')


def save_rss(rss_content, filename):
    """Save the RSS string to a file."""
    try:
        with open(filename, 'w', encoding='utf-8') as f:
            f.write(rss_content)
        print(f"RSS saved to {filename}")
    except Exception as e:
        print(f"Failed to save RSS: {e}")


def main():
    """Fetch the listing page once and write one RSS file per tab."""
    url = 'https://www.xb6v.com/qian50m.html'
    print("Fetching page...")
    html_content = fetch_page(url)
    if not html_content:
        print("Could not fetch the page, exiting")
        return

    # (parser, section name, feed title, feed description, output file)
    feeds = [
        (parse_movies, 'latest movies', '6v Movies - Latest Movies',
         'Latest movies updated on the 6v site', '6v_movies_rss.xml'),
        (parse_series, 'latest series', '6v Movies - Latest Series',
         'Latest series updated on the 6v site', '6v_series_rss.xml'),
        (parse_recommendations, "editor's picks", "6v Movies - Editor's Picks",
         "Editor's picks on the 6v site", '6v_recommendations_rss.xml'),
    ]
    for parser, name, feed_title, feed_description, rss_filename in feeds:
        print(f"\nParsing {name}...")
        items = parser(html_content)
        if not items:
            print(f"No {name} found")
            continue
        print(f"Found {len(items)} {name}")
        print(f"Generating {name} RSS...")
        rss_content = generate_rss(items, feed_title, url, feed_description)
        save_rss(rss_content, rss_filename)

    print("\nDone!")


if __name__ == "__main__":
    main()
```
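The script hashes the entire magnet URI to produce each item's guid. If the site later changes a magnet's tracker list or display name, the URI changes too, and qBittorrent may treat the same torrent as a new item.

One possible alternative is to use the BitTorrent info hash from the `xt=urn:btih:` parameter instead. This is offered as an assumption, not what the script currently does. The sketch below falls back to the MD5 of the whole link when no such parameter is present. The function name `magnet_guid` is hypothetical.

```python
import hashlib
from urllib.parse import parse_qs, urlsplit


def magnet_guid(magnet_link):
    """Return a GUID for a magnet link that survives tracker/name changes.

    Prefers the info hash from xt=urn:btih:..., lower-cased so that upper- and
    lower-case spellings of the same hash match; otherwise falls back to the MD5
    of the full link, as the original script does.
    """
    parts = urlsplit(magnet_link)
    if parts.scheme == "magnet":
        prefix = "urn:btih:"
        for xt in parse_qs(parts.query).get("xt", []):
            if xt.lower().startswith(prefix):
                return xt[len(prefix):].lower()
    return hashlib.md5(magnet_link.encode()).hexdigest()
```

To use it, the line in `generate_rss` that sets `guid.text` would become `guid.text = magnet_guid(magnet['link'])`.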